Posted: 21 December 2007 Updated: Whenever

As always, this rant is my opinion and does not represent anyone's official position. I've received input from several people whose names I will not share, to protect their careers. Comments can be sent to me at cdoswell@earthlink.net, but if you're not willing to have them posted here, along with my response, don't waste our time.

Several years ago, some colleagues and I wrote a paper1 that included a discussion about the sad state of affairs following the passing of Prof. Ted Fujita and its impact on the occurrence of scientific surveys after major tornado events. That discussion, and especially the aftermath of the La Plata, MD, tornado of 28 April 2002, led the National Weather Service (NWS) to form the so-called Quick Response Team (QRT), whose primary goal was to ensure that tornadoes at the upper end of the Fujita Scale were rated properly. The QRT, of course, has no further responsibilities and is not a substitute for the detailed survey information that historically had been provided by Prof. Fujita's surveys.

Fujita's was not the only team doing post-storm surveys. The wind engineers at Texas Tech University have been doing post-storm surveys since the 1970s and they continue to do so, although they focus primarily on a suite of issues that may not cover all aspects of a tornadic event. Some surveys have been done by the National Severe Storms Laboratory, but these generally are limited to tornadic events within their data collection domains. Occasional surveys by other institutions have been done, typically when tornadoes (or other severe weather) occur in their "backyard".

The notion of the NWS replacing post-event scientific surveys of major tornado events with a "service assessment" is consistent with the growth of a bureaucratic mentality that the NWS is not a scientific organization, but one that provides "service", and that this service has only coincidentally anything to do with science. This might be the topic for a different sort of rant altogether, but will not be dealt with here. What concerns me here is that these "service assessments" are not accomplishing even that limited goal. Rather, they've become a pale shadow of the reality associated with the services provided by the NWS offices. It has apparently become unacceptable for the findings of the assessment team to include anything very negative about that service, to the point where important aspects of that service are ignored, overlooked, or even suppressed, should they come to light anyway. I'll be using the "service assessment" associated with the tornadoes of 01 March 2007 in Alabama and Georgia as an example of the issues that concern me.

I don't have any interest in re-creating a detailed analysis of this event. Rather, I want to make some points about the final document (which can be obtained at the link I provided, above). This event came to my attention because some colleagues of mine in the NWS pointed out to me that the warnings exhibited some less than satisfactory characteristics early in the development of this case.

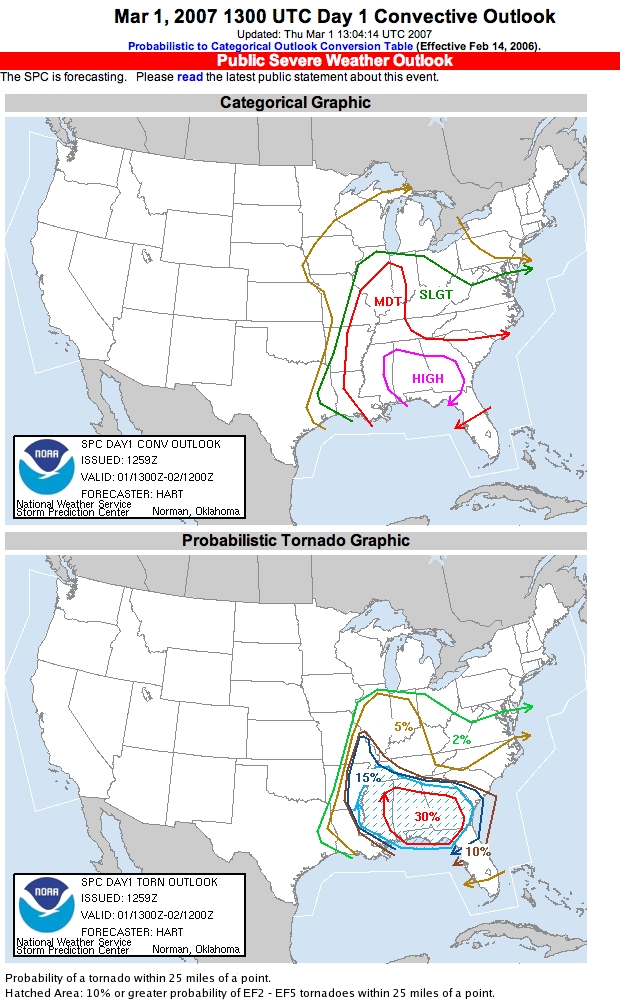

Without going into all of the details, this was a "high risk" day - here is the 1300 UTC outlook from the SPC:

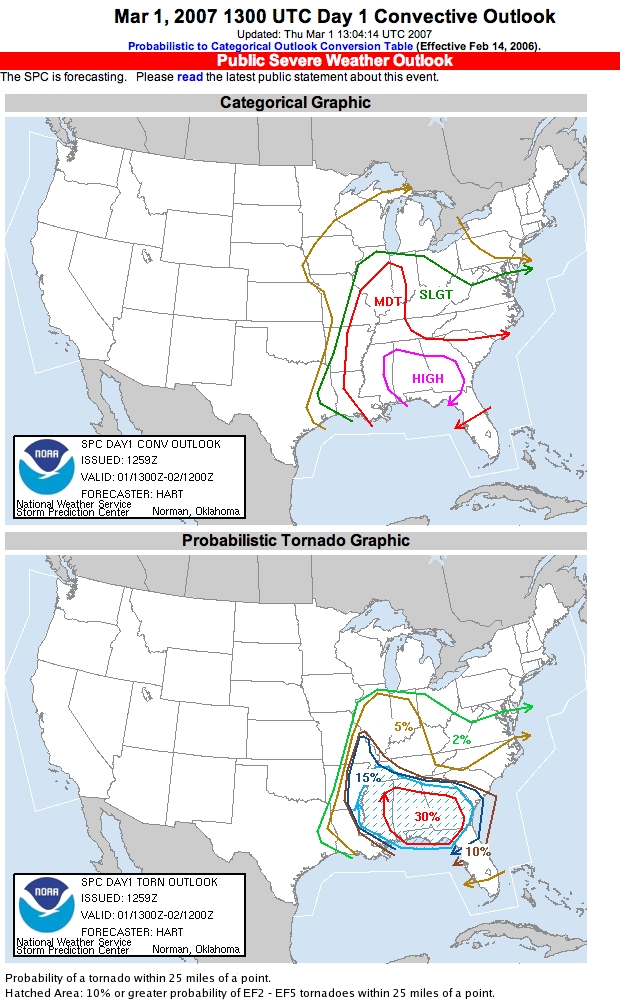

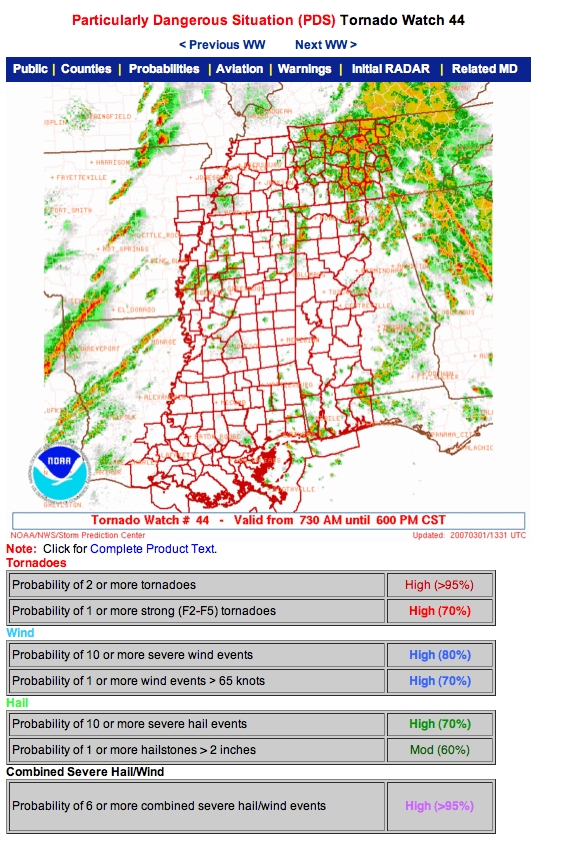

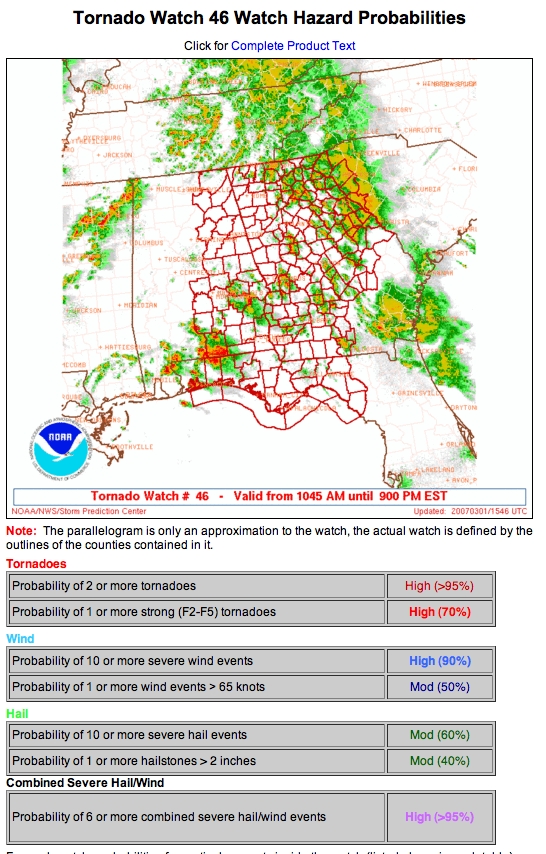

Subsequently, when storms began to develop, two PDS tornado watches were issued with high probabilities for multiple tornadoes and for strong-to-violent tornadoes:

Followed by:

No severe weather event is ever truly "ordinary" but it's evident from these forecast products that the SPC was anticipating an extraordinary day and jumped on the early developments with PDS watches. Yes, it's true that not every "high risk" Outlook followed by a "PDS" Tornado Watch verifies with an outbreak of strong-to-violent tornadoes, but nevertheless this is a situation that certainly had the potential to be a major event, in the view of the SPC forecasters.

So let's consider the warnings issued from the KMOB office. I hasten to add here that although I'm using this example to make a point, I'm not playing "Monday morning quarterback" with the particular individuals responsible for the warnings at KMOB. Rather, my main concern is with regard to the so-called "service assessment" document (see above). In its executive summary, it is asserted that "NWS offices performed well during the event. ... All tornadoes producing fatalities had Tornado Warnings issued for them with adequate lead time." Although it is indeed the case that all the fatality-producing tornadoes occurred within Tornado Warnings that had positive lead times, this is not a very adequate description of the whole picture regarding the KMOB warnings.

Consider the following series of radar images, showing the reflectivity and selected Doppler velocities, with the county warnings issued by KMOB superimposed. Warnings in red are tornado warnings. Warnings in blue are severe thunderstorm warnings.

This pair of images is from 1714 UTC. There are three supercells shown to the northwest of the radar, and no warnings of any sort have been issued for any of them. These are clearly supercells within a High Risk Outlook and a PDS Tornado Watch. Why were no warnings issued for these storms? Was there something the warning forecaster saw in the radar that led him/her to choose not to issue warnings for these storms? If so, what was the reasoning? It seems to me that if "service" is the concern for this assessment, surely someone would want to know what reasoning was behind the decision not to issue warnings by this time!

Moving ahead in time to 1723 UTC ...

Now, for the northernmost storm of the three, a tornado warning has been issued just ahead of the storm, and the two counties behind the storm have severe thunderstorm warnings. The northwesternmost county with a severe thunderstorm warning has no storm in it. Why severe thunderstorm warnings? What about this situation led the warning forecaster to issue severe thunderstorm warnings for a supercell in a PDS watch? The southernmost storm now has a severe thunderstorm warning, but the middle storm has no warning. Why a severe thunderstorm warning for the trailing storm? Why no warning for the middle storm?

Moving ahead 5 minutes ...

Now, one of the former severe thunderstorm warnings has become a tornado warning, and the last supercell in the line still has only a severe thunderstorm warning. Note that the storm in the middle still has no valid warning. What rationale lies behind these decisions? Was s/he waiting for spotters to call in reports? Was there something about the radar that caused the decisions to go this way? Apparently, no one ever questioned the warning forecaster about this. For all I can tell, no one ever examined this phase of the warnings. Why not? Well, one likely possibility is that the assessment team never bothered to consider these relatively early times in the event because no fatality-producing tornadoes were on the ground from these storms at this time.

Given this situation, I'm very hard-pressed to understand why the warning forecaster was not putting out tornado warnings with these storms. I would really want to know what the warning forecaster was using to make these decisions. If s/he saw something in the radar that kept him/her from issuing tornado warnings, it would seem to be of considerable interest to anyone actually interested in assessing the service provided!

Again moving on in time to 1737 UTC,

The situation remains the same ... moving forward again to 1745 UTC...

Finally, the middle storm has a tornado warning, while the last storm in the line continues with a severe thunderstorm warning. Again, what is driving these decisions? Moving on to 1753 UTC ...

Why only a severe thunderstorm warning for the trailing supercell? Moving on to 1758 UTC ...

Apparently, the trailing storm has some characteristic that the warning forecaster sees as arguing profoundly against a tornado warning ... as it moves into the next county, a tornado warning turns into a severe thunderstorm warning. Why?

I note that the one fatality-producing tornado from this event in the KMOB area - an EF4 at Millers Ferry, AL - was produced by the northernmost storm seen in the preceding images. It stands out in the assessment's table (Appendix A) with only a 6-minute lead time. The warnings shown with that storm were dropped for a time after the last image above, and so the tornado, which (according to the report) began at 1824, could have been given a much longer lead time if the storm had been tornado-warned at 1714 (the first panel, above) and the tornado warning maintained for the duration. Was the warning forecaster concerned about having too long a lead time? Again, whatever the logic was for the decision-making at the time should have been determined by the assessment team and included in their findings.
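To put a number on "much longer", here is a minimal sketch of the lead-time arithmetic. It assumes that both times quoted above (1714 and 1824) are UTC on the same day and that lead time is simply the tornado start time minus the warning issuance time. The function name `lead_time_minutes` is hypothetical and is not anything used in the assessment.

```python
from datetime import datetime


def lead_time_minutes(warning_hhmm: str, tornado_hhmm: str) -> int:
    """Return minutes from warning issuance to tornado start.

    Assumes both times are HHMM strings on the same UTC day.
    """
    fmt = "%H%M"
    warned = datetime.strptime(warning_hhmm, fmt)
    began = datetime.strptime(tornado_hhmm, fmt)
    return int((began - warned).total_seconds() // 60)


# Hypothetical case: tornado warning issued at the first radar panel (1714)
# and maintained until the reported tornado start (1824).
hypothetical = lead_time_minutes("1714", "1824")
reported = 6  # lead time in minutes listed in the assessment's Appendix A

print(f"hypothetical lead time: {hypothetical} min")
print(f"reported lead time:     {reported} min")
print(f"difference:             {hypothetical - reported} min")
```

Under those assumptions, a warning issued at the first panel and kept in force would have given roughly 70 minutes of lead time instead of 6.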

I could go on, but I think this sequence makes the point that I want to make. The assessment team has not done its job, so far as I can tell. Surely there are important issues herein about the service that is provided by the NWS for the public.

It certainly is true that none of the fatality-producing tornadoes occurred without warnings from the NWS, but I'm concerned about the process by which these earlier storms went unwarned despite being well-defined, intense supercells in a PDS tornado watch. If the warning forecaster had some insight that justified the decisions made, I would think that this information should have been included in the assessment, so that we all could benefit from the reasoning behind their apparent success. I also know that when warnings are not issued, there's a systematic tendency to dig a lot less vigorously for storm reports.2 There may have been tornadoes, but if no one was killed and no damage to structures was done, we might never know what these storms did during this time. I don't know the geography of the area, or if there were even "targets" to be hit by these storms. At the very least, a service assessment should provide compelling evidence that nothing tornadic occurred during this phase.

Since the transition from real scientific surveys of major tornado events - among the last of which was a survey done on the Plainfield, Illinois tornado of 1990, with Dr. R.A. Maddox (then Director of the National Severe Storms Laboratory) as its team leader3 - to the "service assessment" model, we have seen a steady degradation in the process of learning within the NWS from our experiences with major tornado events. A "service assessment" represents a significant loss of content relative to a scientific survey. I know that some of the hardest-hitting conclusions from the Plainfield event were "toned down" from their original statements, but here is a sample of the content of that older survey made by the disaster survey team (DST):

Finding 18:

...Partly as a result of the direct operational role of senior station management, the public forecaster did not become closely involved in the decisions being made at the severe weather desk. During the severe weather episode, no single forecaster supervised, monitored, and coordinated closely the activities and decision-making process.

Finding 21:

Many of the warnings and statements issued by WSFO Chicago contained inaccurate or potentially confusing information concerning storm location, movement, its severe weather history, and counties and towns likely to lie in its path. The DST feels that these problems reflect a low state of readiness in the overall severe weather program at WSFO Chicago

Finding 29:

The DST found that there was an overall lack of coordinated, comprehensive, or integrated county-wide procedures or structures in place for preparing for, or dealing with, possible severe weather occurrences with the counties struck by the tornado.

Finding 36:

The detailed storm survey accomplished immediately after the disaster by T. Fujita and D. Stiegler provided invaluable information to the DST which was not able to begin its work at the site until the Friday afternoon following the tornado. This survey occurred only because Professor Fujita was able to obtain financial support for the work from the University of Chicago

Recommendation:

Because of the extreme importance of highly detailed documentation of severe weather occurrences to support the operational implementation of the new WSR-88D radars, the DST feels that NOAA should develop a capability for executing very rapid response storm damage surveys during the 1990s

It's pretty evident that NOAA has not paid this latter recommendation any heed. The QRT has been ineffective since its inception, for the most part, and hampered by an inexplicable lack of interest from the local and regional offices of the NWS.

Where are such hard-hitting findings in the present-day Service Assessments? They've essentially disappeared, and were rapidly on their way out immediately after the Plainfield report came out. Clearly, NWS Management didn't want substance - they wanted affirmation that the system is working. The change from "Natural Disaster Surveys" to "Service Assessments" has been a large step backward. Furthermore, by now, it seems, the "agenda" of the assessments has come to be a pre-ordained mission of providing a mostly rosy picture of the services provided by the NWS - with only a few "tweaks" needed apparently to usher in the best of all possible worlds. It seems pretty obvious that NWS Management simply won't tolerate much of a frank and open discussion of its problems when they are revealed by natural disasters.

I have "insider" information that the subject matter for the main findings of the assessment team was essentially set before the assessment team (for the 01 March 2007 case in question) even began its work. Their main mission agenda was set and any departures from that would not be looked upon favorably. My sources have shared with me an original draft of the document that contained roughly twice the meteorological content of the final version. It's not at all obvious why the scientific substance of the final assessment document had to be reduced to the extent that it was. Was there some concern about reducing the printing costs? But this is typical of an NWS management team that seems distinctly uninterested in the science of meteorology.

I also know that the mission of the assessment team included an ad hoc version of a QRT investigation (begun several days after the event, not the day after the event, as it properly should be), and that the QRT aspect of the effort effectively diluted the team's expertise at evaluating the performance of the various offices. Some of the team was preoccupied with QRT topics, not service assessment. It seems the Mobile, AL office "service" was not given a very thorough evaluation - or they would have turned up what I have shown above - and the inadequacy of the assessment team's staffing apparently contributed to this cursory assessment. The extent to which other offices were investigated is likely to be comparable to that done for KMOB - that is to say, inadequately.

Furthermore, I've learned that a number of slightly more negative findings and associated recommendations were not included in the final version of the assessment, but rather were circulated only internally. This was ostensibly because these findings and recommendations were not within the very narrow guidelines of the formal assessment - however, this sounds rather like a rationalization for excluding even these mildly critical comments by the assessment team. Yes, I've seen the document containing the additional findings and recommendations. By whose mandate were these findings excluded from the "service assessment"? Why were these not included with the final document? Just how narrow is the mandate for the assessment teams? What rationale can be used to justify a very narrow interpretation of an assessment team's mission? Ironically, the QRT aspect of the investigation resulted in findings and recommendations about how the QRT part of the investigation was handled that were excluded from the final version of the assessment, apparently on the basis that they were outside the permitted topics of the assessment!

I can understand a reluctance to make "dirty laundry" public. The relatively hard-hitting (but still toned-down from the original) Plainfield disaster survey findings and recommendations would have to be somewhat embarrassing to the NWS. But in the end, nothing substantial was done to remedy the situation in the Plainfield disaster. A few low-ranking personnel were forced into early retirement, but the systemic issues that point to problems at higher levels - at Central Region HQs and at the National HQs - were never mentioned, never addressed, and never fixed. We've reached the point where the "service assessment" process is not even allowed to ask the right questions, much less publish the answers and their recommendations. Therefore, I can guarantee that the problems within the system will not be addressed in a substantial fashion. Bureaucrats can avoid doing much even when investigations are free to point out deficiencies, but in the present situation, the NWS bureaucracy is free even from that "annoyance" and so can perpetuate the myth that the existing system is working as well as can be expected, save for a few trivial adjustments. It's as if the outcome of the Challenger investigation had been "business as usual" and the loss of a whole team of Shuttle astronauts had been written off as unavoidable, given the risks of space travel.

Given the apparent wish of NWS management to "whitewash" any serious blemish on their "service" record, many of us, including many people within the NWS, have become rather cynical. Whatever management's intentions, the result is that important questions regarding the service provided by the NWS are going unanswered, and even unasked! When this is coupled with the "fox guarding the chickenhouse" syndrome, a necessary condition for the improvement of services is no longer present. If any serious assessment of NWS service is to be accomplished, it simply cannot be done entirely by those within the agency. A multi-agency independent investigation team (including diverse interested participants with the scientific qualifications to do the job correctly and including non-NOAA team members) is needed - people without any personal interest in the findings of the investigation and with no reason to be concerned about reporting on unfavorable findings. Until this deficiency is fixed, there can be no substantive assessment of service done, to say nothing of pursuing important scientific issues in the meteorology that we presume to underlie the whole process of weather forecasting and warning decision-making.

Imagine the 1986 Challenger space shuttle disaster investigation done only by NASA bureaucrats and their hand-picked NASA employees. Without Prof. Richard Feynman's input on the Challenger investigation, what would have been the findings of that investigation? Would the NASA bureaucrats have been willing to reveal the true reasons for that terrible "accident" in the absence of anyone on the investigative team from the outside? I'm dubious. I think I know how bureaucracies work. And this is how the NWS is doing their "service assessments" now, having moved away from their "Natural Disaster Survey" approach of the past. Having only NWS people or their carefully-chosen employees involved in the investigations certainly has the appearance of a whitewash, however well-intentioned the team members might be.

It appears to me that many warning forecasters in the NWS are trying to reduce the number of false alarms by holding off issuing tornado warnings until absolutely convinced that tornadoes are imminent. This approach is largely the result of management pressure to improve their verification numbers. There are now warning verification statistical "goals" that have been set by NWS management for the accuracy of tornado warnings. This sort of mandated "numbers game" explicitly encourages forecasters to "play the numbers" rather than to provide service to the public. But is this case the sort of situation (recall, a High Risk Outlook and a PDS tornado watch) where warning forecasters should be seeking aggressively to reduce their false alarms? As I see it, in this case, waiting until the last minute to "pull the trigger" on tornado warnings seems an extraordinarily foolish strategy if the goal is to serve the public. The term "situation awareness" has become popular in some circles within the NWS, but it's apparently the case that the KMOB warning forecaster on this day was pretty far from aware of the situation.4 If there's some general desire to reduce false alarms, let that occur in much less threatening meteorological situations, where many offices now seek to play a CYA game with warnings for marginal storm events in situations with little obvious reason to anticipate significant events. Given fast-moving supercells in this kind of environment, it's quite possible that a number of false alarms might occur before tornadoes begin to occur, but the larger issue of providing enough lead time for people to act far outweighs some dubious "numbers game" with verification statistics. That game could be the subject of another whole rant, but I'll resist the temptation for the moment. The false alarms eroding public credibility for NWS warnings are not associated with cases involving intensifying supercells within a PDS tornado watch!
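The trade-off behind this "numbers game" can be illustrated with a minimal sketch, using the standard textbook definitions of probability of detection (POD) and false alarm ratio (FAR). Every count below is invented purely for illustration and is not data from the 01 March 2007 event. The exact NWS verification procedure is not described here, so the function names and the two "strategies" are hypothetical.

```python
def probability_of_detection(warned_events: int, missed_events: int) -> float:
    """POD: fraction of tornado events that occurred within a valid warning.

    Assumes at least one event occurred (non-zero denominator).
    """
    return warned_events / (warned_events + missed_events)


def false_alarm_ratio(unverified_warnings: int, verified_warnings: int) -> float:
    """FAR: fraction of issued warnings for which no tornado was reported.

    Assumes at least one warning was issued (non-zero denominator).
    """
    return unverified_warnings / (unverified_warnings + verified_warnings)


# HYPOTHETICAL counts, invented for illustration only.
# "Aggressive": warn early on every supercell in the PDS watch.
aggressive_pod = probability_of_detection(warned_events=4, missed_events=0)
aggressive_far = false_alarm_ratio(unverified_warnings=6, verified_warnings=4)

# "Cautious": hold off until a tornado looks imminent.
cautious_pod = probability_of_detection(warned_events=3, missed_events=1)
cautious_far = false_alarm_ratio(unverified_warnings=2, verified_warnings=3)

print(f"aggressive: POD={aggressive_pod:.2f}  FAR={aggressive_far:.2f}")
print(f"cautious:   POD={cautious_pod:.2f}  FAR={cautious_far:.2f}")
```

In this made-up example the cautious strategy posts the better FAR, yet it misses an event, and neither number says anything about how much lead time the public received. That is the sense in which chasing a FAR "goal" can work against the service the warnings are supposed to provide.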

Any evaluation of the service provided by the NWS warning forecasters should be done in the context of the meteorological setting. However, it seems apparent that a real service assessment is not what is being done by these teams, with high-level NWS management bearing the brunt of the responsibility for this deficiency, in my view of it. I find it appalling that we've sunk so low that a real service assessment is being done in name only, not in substance. This is a very depressing trend that shows little sign of changing. So long as NOAA and NWS management are mandating what can and can't be considered, there's little hope for improvement.

1 Speheger, D.A., C.A. Doswell III, and G.J. Stumpf, 2002: The tornadoes of 3 May 1999: Event verification in central Oklahoma and related issues. Wea. Forecasting, 17, 362-381.

2 As I've discussed here, it can be considered a case of "the fox guarding the chickenhouse" when the people doing the verification of the warnings are the same as those issuing the warnings. Although many (probably most) NWS warning forecasters resist the temptation to go down this road, the evidence is overwhelming that enough of them do so to affect the statistics. Warning verification has, unfortunately, become a "game" that sacrifices the value of the warnings in some situations.

3 NOAA, 1991: The Plainfield/Crest Hill tornado, northern Illinois, August 28, 1990. U.S. Department of Commerce Natural Disaster Survey Rep., 96 pp.

4 If there was compelling evidence to the contrary, then that evidence should have been included in the assessment.